Amazon Elastic Container Service now supports Amazon EFS file systems

It has only been five years since Jeff wrote on this blog about the launch of the Amazon Elastic Container Service. I remember reading that post and thinking how exotic and unusual containers sounded. Fast forward to today, and containers are an everyday part of most developers' lives. Whilst customers are increasingly adopting container orchestrators such as ECS, there are still some types of applications that have been hard to move into this containerized world.

Customers building applications that require data persistence or shared storage have faced a challenge because containers are temporary in nature. As containers are scaled in and out dynamically, any local data is lost when containers are terminated. Today we are changing that for ECS by launching support for Amazon Elastic File System (EFS) file systems. Containers running on both ECS and AWS Fargate will be able to use EFS.

This new capability will help customers containerize applications that require shared storage such as content management systems, internal DevOps tools, and machine learning frameworks. A whole new set of workloads will now enjoy the benefits containers bring, enabling customers to speed up their deployment process, optimize their infrastructure utilization, and build more resilient systems.

Amazon Elastic File System (EFS) provides a simple, scalable, elastic, fully managed shared file system, which means that you can store your data separately from your compute. It is also a regional service, meaning that it stores data within and across three Availability Zones for high availability and durability.

Until now, it was possible to get EFS working with ECS if you were running your containers on a cluster of EC2 instances. However, if you wanted to use AWS Fargate as your container data plane then, prior to this announcement, you couldn’t mount an EFS file system. Fargate does not give you, as a customer, access to the managed instances inside the Fargate fleet, so you are unable to make the modifications to those instances that are required to set up EFS.

I’m sure many of our customers will be delighted that they now have a way of connecting EFS file systems easily to ECS. Personally, I’m ecstatic that we can use this new feature in combination with Fargate. It will be perfect for a little side project that I am currently building, and it finally gives us a way of having persistent storage work in combination with serverless containers.

There is a good reason both ECS and EFS include the word Elastic in their names: both of these services can scale up and down as your application requires. EFS scales on demand from zero to petabytes with no disruptions, growing and shrinking automatically as you add and remove files. With ECS there are options to use either Cluster Auto Scaling or Fargate to ensure that your capacity grows and shrinks to meet demand. For you, our customer, this means that you only ever pay for the storage and compute that you actually use.

So, enough talking, let’s get to the fun bit and see how we can get a containerized application working with Amazon Elastic File System (EFS).

A Simple Shared File System Example

For this example, I have built some basic infrastructure, so I can show you the before-and-after effect of adding EFS to a Fargate cluster. Firstly, I have created a VPC that spans two Availability Zones. Secondly, I have created an ECS cluster. On the ECS cluster, I plan to run two containers using Fargate, which means that I don’t have to set up any EC2 instances, as my containers will run on the Fargate fleet that is managed by AWS.

To deploy my application, I create a Task Definition that uses a Docker image of an open source application called Cloud Commander, which is a simple drag-and-drop file manager.

In the ECS console, I create a service and use the Task Definition that I created to deploy my application. Once the service is deployed, and the containers are provisioned, I head over to the Application Load Balancer URL which was created as part of my service, and I can see that my application appears to be working. I can drag a file to upload it to the application.

However, there is a problem. If I refresh the page, occasionally, the file that I dragged to upload disappears. This happens because I have two containers running my application, and they are both using their local file systems. As I refresh my browser, the load balancer sends me to one of the two containers, and only one of the containers is storing the file on its local volume.

What I need is a shared file system that both the containers can mount and to which they can both write files.
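To make the failure mode concrete, here is a small Python simulation of the situation described above. It is only a sketch: the `Container` class, the `simulate` function, the file name, and the strict round-robin balancer are all hypothetical stand-ins, not how the load balancer or Cloud Commander are actually implemented.

```python
from itertools import cycle


class Container:
    """Hypothetical stand-in for one running copy of the application."""

    def __init__(self, name, storage=None):
        self.name = name
        # Each container gets private storage unless a shared one is passed in.
        self.files = set() if storage is None else storage

    def upload(self, filename):
        self.files.add(filename)

    def list_files(self):
        return sorted(self.files)


def simulate(containers, refreshes=4):
    """Upload one file, then refresh the page via a round-robin balancer."""
    balancer = cycle(containers)
    next(balancer).upload("photo.png")
    return [next(balancer).list_files() for _ in range(refreshes)]


# Local storage: only the container that received the upload has the file.
local = simulate([Container("a"), Container("b")])

# Shared storage (the role EFS plays): every container sees the same files.
shared_volume = set()
shared = simulate([Container("a", shared_volume), Container("b", shared_volume)])

print(local)
print(shared)
```

With local storage, every other refresh comes back empty. With the shared set, every refresh lists the uploaded file, which is the behavior a shared file system is meant to provide.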

Next, I create a new file system inside the EFS console. In the wizard, I choose the same VPC that I used when I created my ECS cluster, select all of the Availability Zones that the VPC spans, and ask the service to create a mount target in each one. These mount targets mean that containers in different Availability Zones will still be able to connect to the file system.
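For readers who prefer scripting to the console wizard, the mount target step could be sketched roughly as follows. This assumes the boto3 EFS client and its `create_mount_target` call; the helper names and every ID shown are placeholders invented for illustration, not values from this walkthrough.

```python
def mount_target_requests(file_system_id, subnet_ids, security_group_id):
    """Build one CreateMountTarget request per subnet (one subnet per Availability Zone)."""
    return [
        {
            "FileSystemId": file_system_id,
            "SubnetId": subnet_id,
            "SecurityGroups": [security_group_id],
        }
        for subnet_id in subnet_ids
    ]


def create_mount_targets(efs_client, requests):
    """Send each request; efs_client is expected to be boto3.client("efs")."""
    return [efs_client.create_mount_target(**request) for request in requests]


# Placeholder IDs (assumptions), one subnet for each of the two Availability Zones.
mount_requests = mount_target_requests(
    "fs-12345678",
    ["subnet-aaaa1111", "subnet-bbbb2222"],
    "sg-0123456789abcdef0",
)
for request in mount_requests:
    print(request)
```

Calling `create_mount_targets(boto3.client("efs"), mount_requests)` would send the requests. The security group attached to the mount targets needs to allow inbound NFS traffic (TCP port 2049) from the containers.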

I select the defaults for all the other options in the wizard. In Step 3, I click the button to add an access point. An access point is a way of giving a particular application access to the file system, and it gives me granular control over what data my application is allowed to access. You can add multiple access points to your EFS file system and give different applications different levels of access to the same file system.

The application I am deploying will handle user uploads for my web site, so I will create an EFS Access Point that gives this application full access to the /uploads directory, but nothing else. To do this, I will create an access point with a new User ID (1000) and Group ID (1000), and a home directory of /uploads. The directory will be created with this user and group as the owner with full permissions, giving read permissions to all other users.
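The same access point could be described in code along these lines. This is a sketch that assumes the boto3 EFS client's `create_access_point` call; the helper names and the file system ID are placeholders. The `"755"` permission string is also an assumption, chosen as one reading of "full permissions for the owner, read access for everyone else" (it additionally grants the execute bit that is needed to enter a directory).

```python
def access_point_request(file_system_id, path="/uploads", uid=1000, gid=1000,
                         permissions="755"):
    """Build CreateAccessPoint parameters that confine an application to one directory."""
    return {
        "FileSystemId": file_system_id,
        # Identity enforced for every file operation made through the access point.
        "PosixUser": {"Uid": uid, "Gid": gid},
        "RootDirectory": {
            # The application sees this directory as the root of the file system.
            "Path": path,
            # Used only if the directory does not exist yet.
            "CreationInfo": {
                "OwnerUid": uid,
                "OwnerGid": gid,
                "Permissions": permissions,
            },
        },
    }


def create_access_point(efs_client, request):
    """efs_client is expected to be boto3.client("efs")."""
    return efs_client.create_access_point(**request)


# Placeholder file system ID (assumption).
uploads_access_point = access_point_request("fs-12345678")
print(uploads_access_point)
```

Keeping the request builder separate from the call makes it easy to inspect or review the parameters before anything is created.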

Security is the number one priority at AWS and the team have worked hard to ensure ECS integrates with EFS to provide multiple layers of security for protecting EFS file systems from unauthorized access, including IAM role-based access control, VPC security groups, and encryption of data in transit.

After working through the wizard, my file system is created, and I’m given a File system ID and an Access point ID. I will need these IDs to configure the task definitions in Fargate.

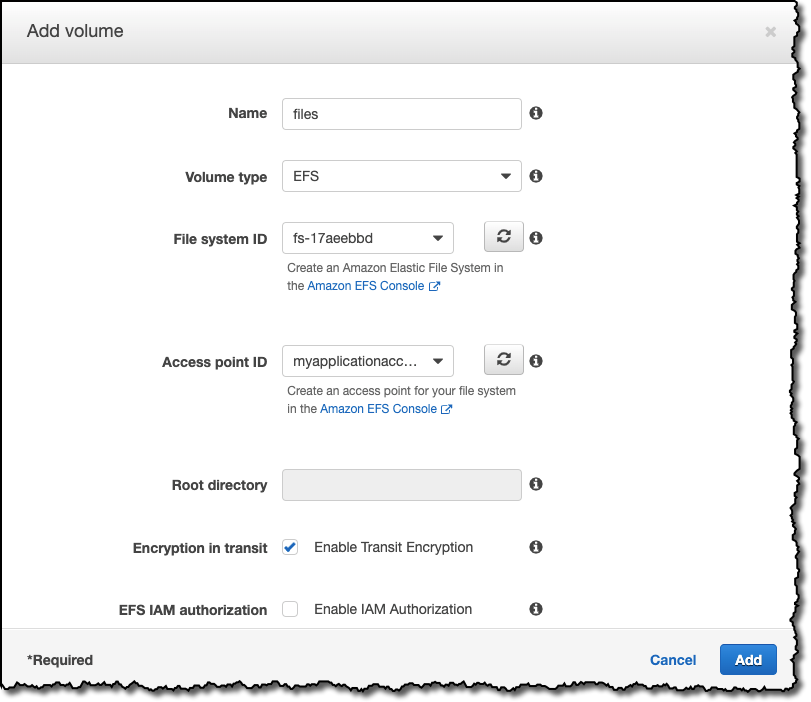

I go back to the Task Definition inside my ECS Cluster and create a new revision of the Task Definition. I scroll down to the Volumes section of the definition and click Add volume.

I can then add my EFS file system details. I select the correct File system ID and the Access point ID that I created earlier.

I have opted to Enable Encryption in transit, but for this example I have not enabled EFS IAM authorization, which would be helpful in a larger application with many clients requiring different levels of access to different portions of a file system. IAM authorization can simplify management in those cases. If you want more details, check out the blog post we wrote when the feature launched earlier in the year.
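Expressed as task definition parameters, the volume settings chosen above might look like the sketch below. The field names follow the ECS `efsVolumeConfiguration` structure. The family name, container name, image name, port, CPU and memory sizes, mount path, and both IDs are assumptions made for illustration and are not taken from this walkthrough.

```python
import json


def efs_volume(name, file_system_id, access_point_id):
    """Volume entry: encryption in transit on, IAM authorization off."""
    return {
        "name": name,
        "efsVolumeConfiguration": {
            "fileSystemId": file_system_id,
            # Transit encryption must be enabled when an access point is used.
            "transitEncryption": "ENABLED",
            "authorizationConfig": {
                "accessPointId": access_point_id,
                "iam": "DISABLED",
            },
        },
    }


def task_definition(volume, container_path):
    """Fargate task definition that mounts the volume into a single container."""
    return {
        "family": "cloud-commander",            # hypothetical family name
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",
        "cpu": "256",
        "memory": "512",
        "containerDefinitions": [
            {
                "name": "cloudcmd",              # hypothetical container name
                "image": "coderaiser/cloudcmd",  # assumed image name
                "essential": True,
                "portMappings": [{"containerPort": 8000}],  # assumed port
                "mountPoints": [
                    {
                        "sourceVolume": volume["name"],
                        "containerPath": container_path,
                        "readOnly": False,
                    }
                ],
            }
        ],
        "volumes": [volume],
    }


# Placeholder IDs (assumptions).
volume = efs_volume("uploads", "fs-12345678", "fsap-0123456789abcdef0")
definition = task_definition(volume, "/uploads")
print(json.dumps(definition, indent=2))
```

A dictionary shaped like this could be passed to the boto3 ECS client as `register_task_definition(**definition)` to create the new revision. The `mountPoints` entry refers to the volume by name, so the two must stay in sync.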

Now that I have updated my task definition, I can update my ECS service to use this new definition. It’s also essential here to make sure that I set the platform version to 1.4.0.
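The service update can be sketched the same way. This assumes the boto3 ECS client's `update_service` call; the cluster name, service name, and task definition revision are placeholders.

```python
def service_update_request(cluster, service, task_definition):
    """Point the service at the new revision and pin the Fargate platform version."""
    return {
        "cluster": cluster,
        "service": service,
        "taskDefinition": task_definition,
        # The platform version this walkthrough sets for the EFS-enabled service.
        "platformVersion": "1.4.0",
    }


def apply_update(ecs_client, request):
    """ecs_client is expected to be boto3.client("ecs")."""
    return ecs_client.update_service(**request)


# Placeholder names (assumptions); "family:revision" selects a specific revision.
update = service_update_request("demo-cluster", "cloudcmd-service", "cloud-commander:2")
print(update)
```

Passing the platform version explicitly avoids relying on whatever version the service was previously using.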

The service deploys my two new containers and decommissions the old two. The new containers will now be using the shared EFS file system, and so my application now works as expected.

If I upload files and then revisit the application, my files will still be there. If my containers are replaced or are scaled up or down, the file system will persist.

Looking to the Future

I am loving the innovation that has been coming out of the containers teams recently, and looking at their public roadmap, they have some really exciting plans for the future. If you have ideas or feature requests, make sure you add your voice to the many customers who are already guiding their roadmap.

The new feature is available in all regions where ECS and EFS are available and comes at no additional cost. So, go check it out in the AWS console and let us know what you think.

Happy Containerizing

Source: AWS News