Amazon Prime Day 2019 – Powered by AWS

What did you buy for Prime Day? I bought a 34″ Alienware Gaming Monitor and used it to replace a pair of 25″ monitors that had served me well for the past six years.

As I have done in years past, I would like to share a few of the many ways that AWS helped to make Prime Day a reality for our customers. You can read “How AWS Powered Amazon’s Biggest Day Ever” and “Prime Day 2017 – Powered by AWS” to learn more about how we evaluate the results of each Prime Day and use what we learn to drive improvements to our systems and processes.

This year I would like to focus on three ways that AWS helped to support record-breaking amounts of traffic and sales on Prime Day: Amazon Prime Video Infrastructure, AWS Database Infrastructure, and Amazon Compute Infrastructure. Let’s take a closer look at each one…

Amazon Prime Video Infrastructure

Amazon Prime members were able to enjoy the second Prime Day Concert (presented by Amazon Music) on July 10, 2019. Headlined by 10-time Grammy winner Taylor Swift, this live-streamed event also included performances from Dua Lipa, SZA, and Becky G.

Live-streaming an event of this magnitude and complexity to an audience in over 200 countries required a considerable amount of planning and infrastructure. Our colleagues at Amazon Prime Video used multiple AWS Media Services, including AWS Elemental MediaPackage and AWS Elemental Live encoders, to encode and package the video stream.

The streaming setup used two AWS Regions, with a redundant pair of processing pipelines in each region. The pipelines delivered 1080p video at 30 fps to multiple content delivery networks (including Amazon CloudFront) and worked smoothly.

AWS Database Infrastructure

A combination of NoSQL and relational databases was used to deliver high availability and consistent performance at extreme scale during Prime Day:

Amazon DynamoDB supports multiple high-traffic sites and systems, including Alexa, the Amazon.com sites, and all 442 Amazon fulfillment centers. Across the 48 hours of Prime Day, these sources made 7.11 trillion calls to the DynamoDB API, peaking at 45.4 million requests per second.
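To put the DynamoDB numbers in perspective, here is a quick back-of-the-envelope sketch (my own arithmetic, not an official metric). It assumes the 7.11 trillion calls were counted over exactly 48 hours and compares the resulting average rate with the reported peak:

```python
# Back-of-the-envelope check on the DynamoDB figures quoted above.
# Assumption: the 7.11 trillion calls are counted over exactly 48 hours.
TOTAL_CALLS = 7.11e12          # DynamoDB API calls across Prime Day
PEAK_RPS = 45.4e6              # reported peak requests per second
WINDOW_SECONDS = 48 * 60 * 60  # 48 hours expressed in seconds


def average_rate(total_calls: float, seconds: float) -> float:
    """Mean requests per second if the calls were spread evenly over the window."""
    return total_calls / seconds


avg_rps = average_rate(TOTAL_CALLS, WINDOW_SECONDS)
peak_to_avg = PEAK_RPS / avg_rps

print(f"average: {avg_rps / 1e6:.1f} million requests/second")  # average: 41.1 million requests/second
print(f"peak/average ratio: {peak_to_avg:.2f}")                   # peak/average ratio: 1.10
```

Under that assumption, the average works out to roughly 41 million requests per second, only about 10% below the peak, which suggests the load stayed close to its maximum for much of the event rather than spiking briefly.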

Amazon Aurora also supports the network of Amazon fulfillment centers. On Prime Day, 1,900 database instances processed 148 billion transactions, stored 609 terabytes of data, and transferred 306 terabytes of data.
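Dividing the Aurora totals by the number of instances gives a rough feel for the per-instance load. This is a minimal sketch that assumes an even split across all 1,900 instances (real workloads are rarely that uniform) and decimal units (1 TB = 1,000 GB):

```python
# Per-instance averages for the Aurora figures quoted above.
# Assumptions: an even split across instances, and decimal units (1 TB = 1,000 GB).
INSTANCES = 1_900
TRANSACTIONS = 148e9      # transactions processed
STORED_TB = 609           # terabytes stored
TRANSFERRED_TB = 306      # terabytes transferred

tx_per_instance = TRANSACTIONS / INSTANCES
stored_gb_per_instance = STORED_TB * 1_000 / INSTANCES
transferred_gb_per_instance = TRANSFERRED_TB * 1_000 / INSTANCES

print(f"transactions per instance: {tx_per_instance / 1e6:.1f} million")  # 77.9 million
print(f"stored per instance: {stored_gb_per_instance:.0f} GB")             # 321 GB
print(f"transferred per instance: {transferred_gb_per_instance:.0f} GB")   # 161 GB
```

These are averages only; individual instances could have carried considerably more or less.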

Amazon Compute Infrastructure

Prime Day 2019 also relied on a massive, diverse collection of EC2 instances. The internal scaling metric for these instances is known as a server equivalent; Prime Day started off with 372K server equivalents and scaled up to 426K at peak.
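The scale-up itself is simple arithmetic. Treating server equivalents as a plain count (they are an internal metric, so this is only illustrative), a minimal sketch:

```python
# Scale-up arithmetic for the EC2 server-equivalent figures quoted above.
START_SE = 372_000   # server equivalents at the start of Prime Day
PEAK_SE = 426_000    # server equivalents at peak


def percent_increase(start: float, peak: float) -> float:
    """Growth from start to peak, as a percentage of the starting value."""
    return (peak - start) / start * 100


added = PEAK_SE - START_SE
growth = percent_increase(START_SE, PEAK_SE)
print(f"added {added:,} server equivalents ({growth:.1f}% growth)")
# added 54,000 server equivalents (14.5% growth)
```

In other words, the fleet grew by about 54K server equivalents, roughly 14.5% on top of an already very large baseline.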

Those EC2 instances made great use of a massive fleet of Elastic Block Store (EBS) volumes. The team added 63 petabytes of storage ahead of Prime Day; the resulting fleet handled 2.1 trillion requests per day and transferred 185 petabytes of data per day.
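The daily EBS figures are easier to grasp as per-second rates. This sketch assumes decimal units (1 PB = 10^15 bytes) and traffic spread evenly across the day, so the results are averages rather than peaks; the bytes-per-request figure is a rough mean that simply divides total bytes by total requests:

```python
# Per-second view of the daily EBS figures quoted above.
# Assumptions: decimal units (1 PB = 10**15 bytes), and traffic spread
# evenly across the day, so these are averages rather than peaks.
SECONDS_PER_DAY = 24 * 60 * 60
REQUESTS_PER_DAY = 2.1e12
BYTES_PER_DAY = 185 * 10**15   # 185 PB

requests_per_second = REQUESTS_PER_DAY / SECONDS_PER_DAY
tb_per_second = BYTES_PER_DAY / SECONDS_PER_DAY / 10**12
kb_per_request = BYTES_PER_DAY / REQUESTS_PER_DAY / 10**3

print(f"{requests_per_second / 1e6:.1f} million requests/second")  # 24.3 million requests/second
print(f"{tb_per_second:.2f} TB/second")                            # 2.14 TB/second
print(f"{kb_per_request:.0f} KB per request (mean)")               # 88 KB per request (mean)
```

That is roughly 24 million requests and over 2 TB of data moving through EBS every second, on average.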

And That’s a Wrap

These are some impressive numbers, and they show the kind of scale that you can achieve with AWS. As you can see, scaling up for one-time (or periodic) events and then scaling back down afterward is easy and straightforward, even at world scale!

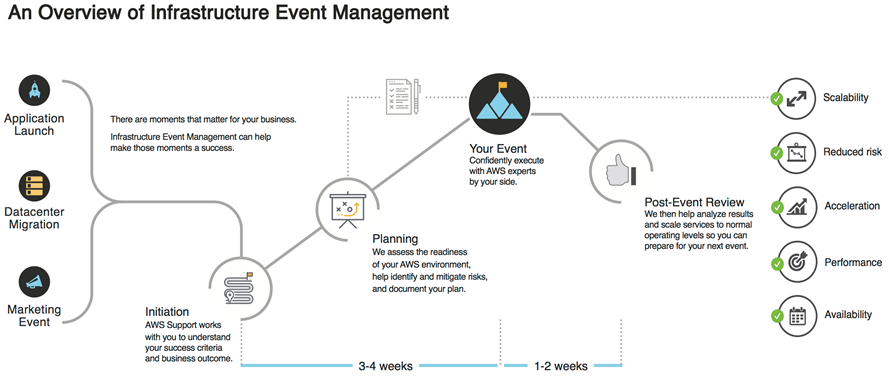

If you want to run your own world-scale event, I’d advise you to check out the blog posts that I linked above, and also be sure to read about AWS Infrastructure Event Management. My colleagues are ready (and eager) to help you to plan for your large-scale product or application launch, infrastructure migration, or marketing event. Here’s an overview of their process:

— Jeff;

Source: AWS News