S3 Replication Update: Replication SLA, Metrics, and Events

S3 Cross-Region Replication has been around since early 2015 (see New Cross-Region Replication for Amazon S3), and Same-Region Replication has been around for a couple of months.

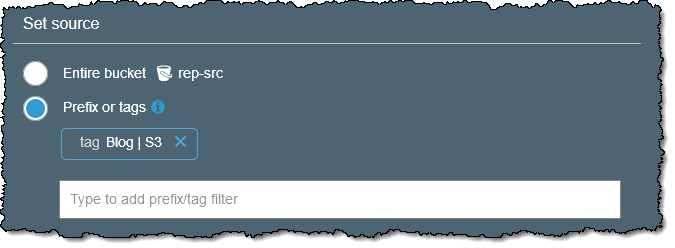

Replication is very easy to set up, and lets you use rules to specify that you want to copy objects from one S3 bucket to another one. The rules can specify replication of the entire bucket, or of a subset based on prefix or tag:
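To make the rule options concrete, here is a minimal sketch of how a rule for the whole bucket, a prefix, or a set of tags might be expressed as the dictionary that the S3 `PutBucketReplication` API accepts. The helper names (`build_rule_filter`, `build_rule`), the rule ID, and the bucket ARN are hypothetical and only for illustration; the sketch builds the data structure and does not call AWS.

```python
from typing import Dict, Optional


def build_rule_filter(prefix: Optional[str] = None,
                      tags: Optional[Dict[str, str]] = None) -> dict:
    """Return a replication rule Filter for a prefix, tags, or both."""
    tag_list = [{"Key": k, "Value": v} for k, v in (tags or {}).items()]
    if prefix and tag_list:
        # Prefix combined with one or more tags must be wrapped in "And".
        return {"And": {"Prefix": prefix, "Tags": tag_list}}
    if len(tag_list) > 1:
        return {"And": {"Tags": tag_list}}
    if tag_list:
        return {"Tag": tag_list[0]}
    # An empty prefix matches every object, i.e. the entire bucket.
    return {"Prefix": prefix or ""}


def build_rule(rule_id: str, destination_bucket_arn: str, priority: int = 1,
               prefix: Optional[str] = None,
               tags: Optional[Dict[str, str]] = None) -> dict:
    """Build one replication rule (hypothetical helper, not an AWS API)."""
    return {
        "ID": rule_id,
        "Priority": priority,
        "Status": "Enabled",
        "Filter": build_rule_filter(prefix, tags),
        "DeleteMarkerReplication": {"Status": "Disabled"},
        "Destination": {"Bucket": destination_bucket_arn},
    }


# Hypothetical example: replicate only objects under "logs/".
logs_rule = build_rule("logs-rule", "arn:aws:s3:::example-destination",
                       prefix="logs/")
```

With boto3, a list of such rules would typically be passed as `ReplicationConfiguration={"Role": role_arn, "Rules": [logs_rule]}` to `put_bucket_replication`; the IAM role and both buckets (with versioning enabled) need to exist first.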

You can use replication to copy critical data within or between AWS regions in order to meet regulatory requirements for geographic redundancy as part of a disaster recovery plan, or for other operational reasons. You can copy within a region to aggregate logs, set up test & development environments, and to address compliance requirements.

S3’s replication features have been put to great use: Since the launch in 2015, our customers have replicated trillions of objects and exabytes of data! Today I am happy to be able to tell you that we are making it even more powerful, with the addition of Replication Time Control. This feature builds on the existing rule-driven replication and gives you fine-grained control based on tag or prefix so that you can use Replication Time Control with the data set you specify. Here’s what you get:

Replication SLA – You can now take advantage of a replication SLA to increase the predictability of replication time.

Replication Metrics – You can now monitor the maximum replication time for each rule using new CloudWatch metrics.

Replication Events – You can now use events to track any object replications that deviate from the SLA.

Let’s take a closer look!

New Replication SLA

S3 replicates your objects to the destination bucket, with timing influenced by object size & count, available bandwidth, other traffic to the buckets, and so forth. In situations where you need additional control over replication time, you can use our new Replication Time Control feature, which is designed to perform as follows:

- Most of the objects will be replicated within seconds.

- 99% of the objects will be replicated within 5 minutes.

- 99.99% of the objects will be replicated within 15 minutes.

When you enable this feature, you benefit from the associated Service Level Agreement. The SLA is expressed in terms of a percentage of objects that are expected to be replicated within 15 minutes, and provides for billing credits if the SLA is not met:

- 99.9% to 98.0% – 10% credit

- 98.0% to 95.0% – 25% credit

- 95% to 0% – 100% credit

The billing credit applies to a percentage of the Replication Time Control fee, replication data transfer, S3 requests, and S3 storage charges in the destination for the billing period.
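The credit tiers above can be sketched as a small lookup. The function name is hypothetical, and the treatment of the exact boundary values (99.9%, 98.0%, 95.0%) is my assumption, since the ranges as listed share their endpoints; the published SLA is the authority on those details.

```python
def sla_credit_percent(replicated_within_15_min_pct: float) -> int:
    """Map the monthly percentage of objects replicated within 15 minutes
    to the billing credit percentage from the tiers listed above.

    Assumption: each tier includes its lower bound and excludes its
    upper bound (for example, exactly 98.0% earns the 10% credit).
    """
    pct = replicated_within_15_min_pct
    if not 0.0 <= pct <= 100.0:
        raise ValueError("percentage must be between 0 and 100")
    if pct >= 99.9:
        return 0    # SLA met, no credit
    if pct >= 98.0:
        return 10
    if pct >= 95.0:
        return 25
    return 100


# Hypothetical month in which 97.5% of objects replicated within 15 minutes.
credit = sla_credit_percent(97.5)
```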

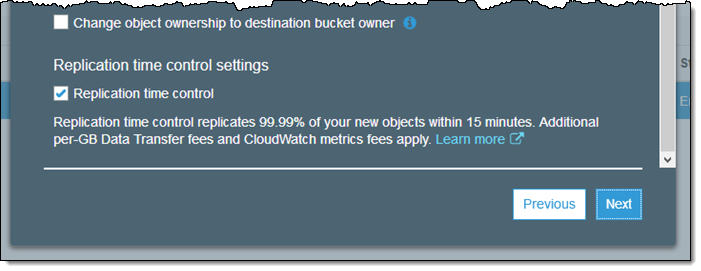

I can enable Replication Time Control when I create a new replication rule, and I can also add it to an existing rule:
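Outside the console, adding the feature to an existing rule amounts to setting two blocks on the rule's destination. The sketch below assumes the rule is already in the dictionary shape used by the `PutBucketReplication` API; the helper name and the sample rule are hypothetical, and to my knowledge 15 minutes is the only threshold value S3 accepts.

```python
import copy

# Assumption: 15 is the threshold value S3 accepts for both settings.
RTC_MINUTES = 15


def with_replication_time_control(rule: dict) -> dict:
    """Return a copy of a replication rule with Replication Time Control
    and replication metrics enabled on its destination."""
    updated = copy.deepcopy(rule)  # leave the caller's rule untouched
    destination = updated.setdefault("Destination", {})
    destination["ReplicationTime"] = {
        "Status": "Enabled",
        "Time": {"Minutes": RTC_MINUTES},
    }
    # Metrics are enabled together with Replication Time Control.
    destination["Metrics"] = {
        "Status": "Enabled",
        "EventThreshold": {"Minutes": RTC_MINUTES},
    }
    return updated


# Hypothetical existing rule, as it might come back from
# get_bucket_replication.
existing_rule = {
    "ID": "logs-rule",
    "Priority": 1,
    "Status": "Enabled",
    "Filter": {"Prefix": "logs/"},
    "DeleteMarkerReplication": {"Status": "Disabled"},
    "Destination": {"Bucket": "arn:aws:s3:::example-destination"},
}
rtc_rule = with_replication_time_control(existing_rule)
```

The updated rule would then be written back with the rest of the bucket's replication configuration, since the API replaces the configuration as a whole.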

Replication begins as soon as I create or update the rule. I can use the Replication Metrics and the Replication Events to monitor compliance.

In addition to the existing charges for S3 requests and data transfer between regions, you will pay an extra per-GB charge to use Replication Time Control; see the S3 Pricing page for more information.

Replication Metrics

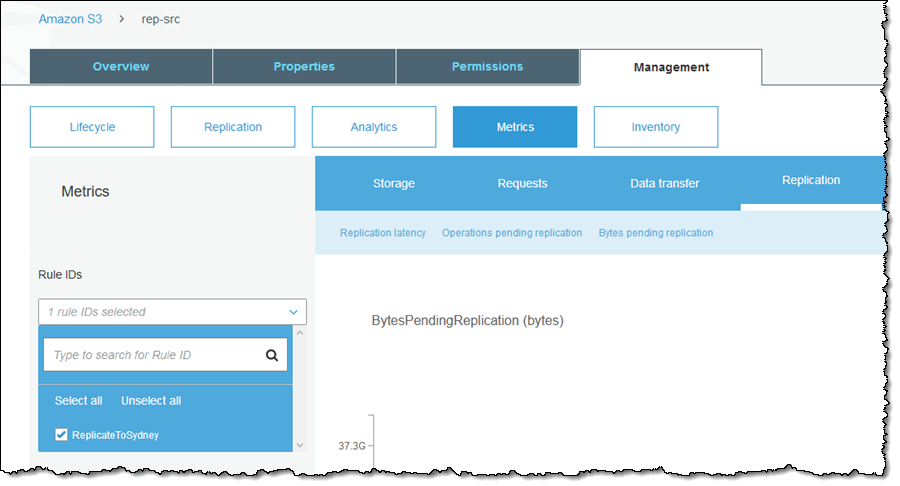

Each time I enable Replication Time Control for a rule, S3 starts to publish three new metrics to CloudWatch. They are available in the S3 and CloudWatch Consoles:

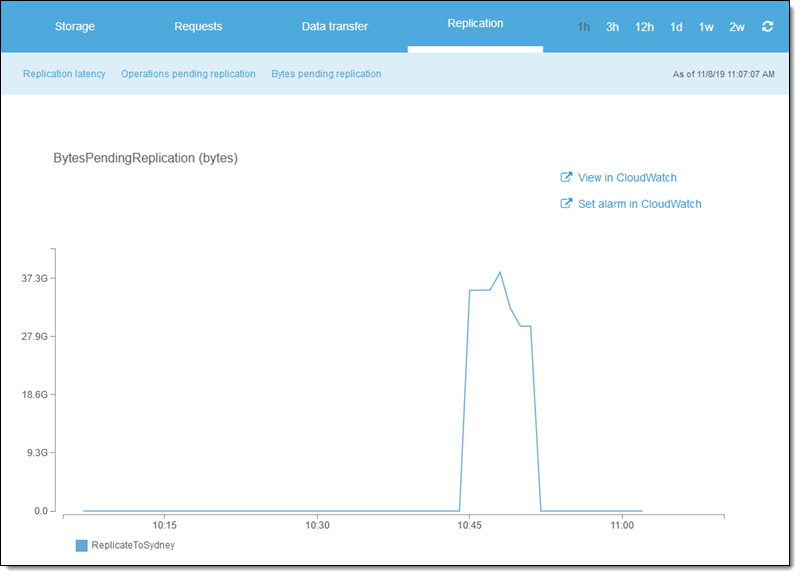

I created some large tar files, and uploaded them to my source bucket. I took a quick break, and inspected the metrics. Note that I did my testing before the launch, so don’t get overly concerned with the actual numbers. Also, keep in mind that these metrics are aggregated across the replication for display, and are not a precise indication of per-object SLA compliance.

BytesPendingReplication jumps up right after the upload, and then drops down as the replication takes place:

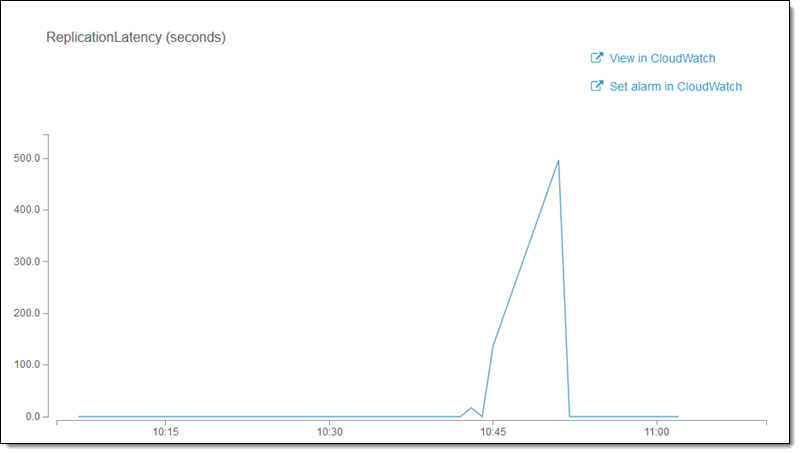

ReplicationLatency peaks and then quickly drops down to zero after S3 Replication transfers over 37 GB from the United States to Australia with a maximum latency of 8.3 minutes:

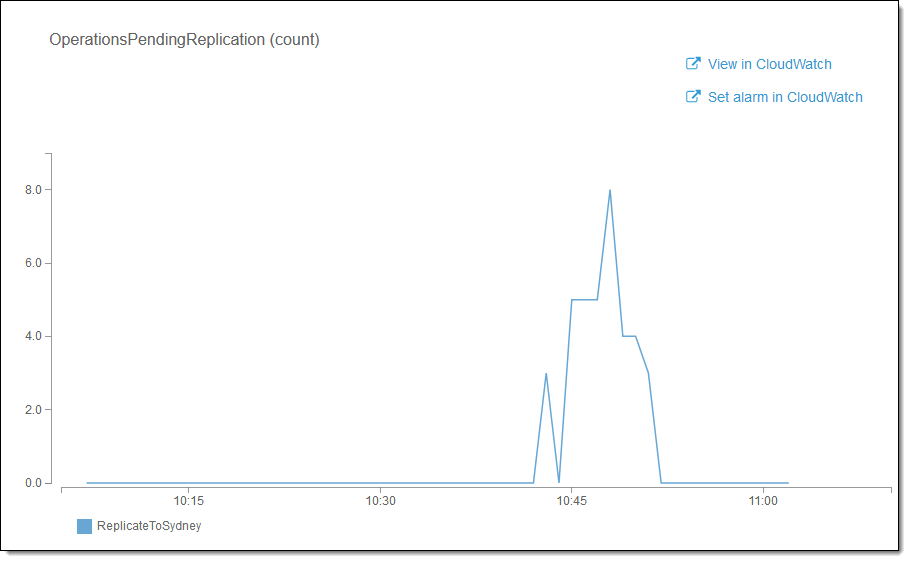

And OperationsPendingCount tracks the number of objects to be replicated:

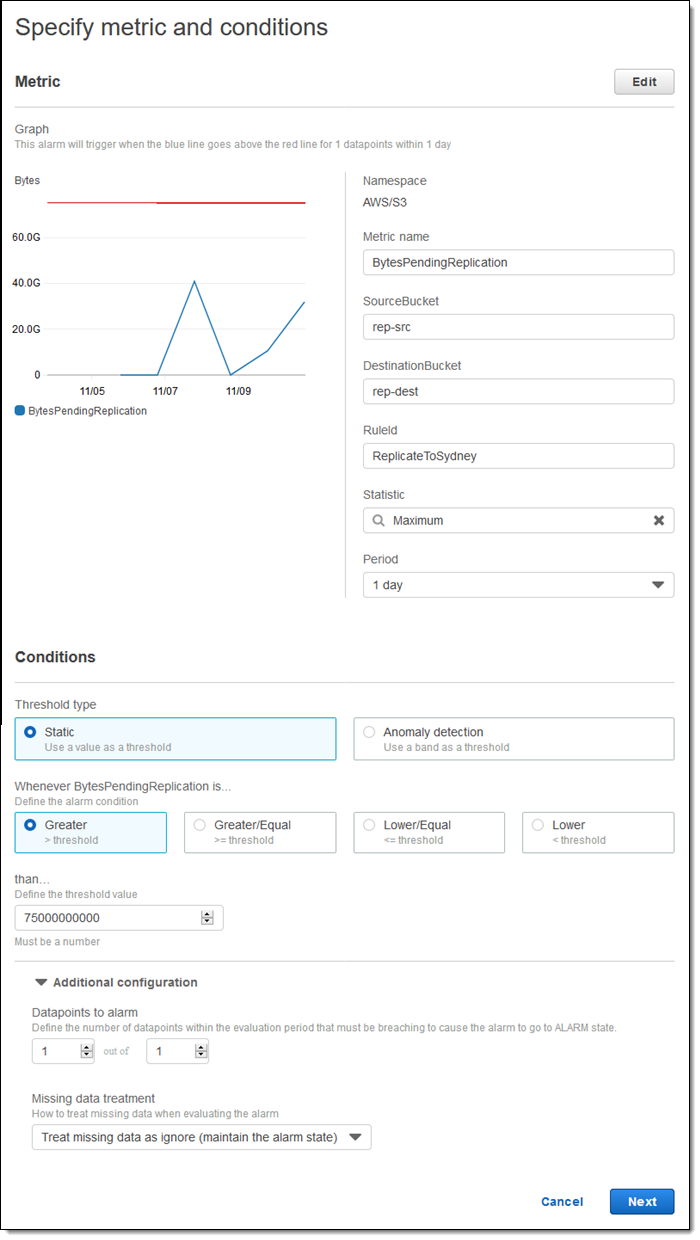

I can also set CloudWatch Alarms on the metrics. For example, I might want to know if I have a replication backlog larger than 75 GB (for this to work as expected, I must set the Missing data treatment to Treat missing data as ignore (maintain the alarm state)):
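The same alarm could be defined in code. Here is a minimal sketch that builds the keyword arguments for CloudWatch's `PutMetricAlarm`; the helper name, alarm name, bucket names, and rule ID are hypothetical. The dimension names (`SourceBucket`, `DestinationBucket`, `RuleId`), the 5-minute period, and the choice of 1 GB = 1024³ bytes are assumptions to check against your own metrics in the CloudWatch console.

```python
GIB = 1024 ** 3  # assumption: treat "GB" as 2**30 bytes


def backlog_alarm_params(source_bucket: str, destination_bucket: str,
                         rule_id: str, threshold_gb: int = 75) -> dict:
    """Build PutMetricAlarm arguments for a replication backlog alarm."""
    return {
        "AlarmName": f"s3-replication-backlog-{rule_id}",
        "Namespace": "AWS/S3",
        "MetricName": "BytesPendingReplication",
        "Dimensions": [
            {"Name": "SourceBucket", "Value": source_bucket},
            {"Name": "DestinationBucket", "Value": destination_bucket},
            {"Name": "RuleId", "Value": rule_id},
        ],
        "Statistic": "Maximum",
        "Period": 300,
        "EvaluationPeriods": 1,
        "Threshold": float(threshold_gb * GIB),
        "ComparisonOperator": "GreaterThanThreshold",
        # Matches "Treat missing data as ignore (maintain the alarm state)".
        "TreatMissingData": "ignore",
    }


params = backlog_alarm_params("example-source", "example-destination",
                              "logs-rule")
```

With boto3 these would be passed as `boto3.client("cloudwatch").put_metric_alarm(**params)`, usually along with an `AlarmActions` list naming an SNS topic to notify.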

These metrics are billed as CloudWatch Custom Metrics.

Replication Events

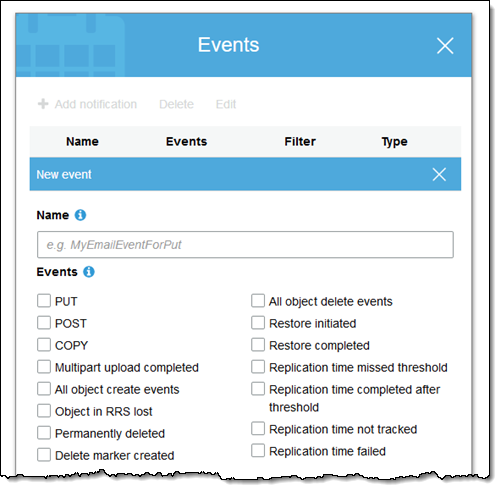

Finally, you can track replication issues by setting up events on an SQS queue, SNS topic, or Lambda function. Start at the console’s Events section:

You can use these events to monitor adherence to the SLA. For example, you could store *Replication time missed threshold* and *Replication time completed after threshold* events in a database to track occasions where replication took longer than expected. The first event will tell you that the replication is running late, and the second will tell you that it has completed, and how late it was.
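As a rough sketch of that idea, the function below picks the two late-replication events out of an S3 notification payload and flattens them into rows that could be written to a database. The function name and the output field names are hypothetical. The event names and the `replicationEventData` fields follow my reading of the S3 event message structure and should be verified against a real notification before relying on them.

```python
# Assumed event names as they appear in the record's "eventName" field;
# endswith() is used so an "s3:" prefix does not matter.
LATE_EVENTS = {
    "Replication:OperationMissedThreshold": "missed_threshold",
    "Replication:OperationReplicatedAfterThreshold": "completed_after_threshold",
}


def extract_late_replications(event: dict) -> list:
    """Return one row per late-replication record in an S3 notification."""
    rows = []
    for record in event.get("Records", []):
        name = record.get("eventName", "")
        kind = next((label for suffix, label in LATE_EVENTS.items()
                     if name.endswith(suffix)), None)
        if kind is None:
            continue  # not a late-replication event
        s3 = record.get("s3", {})
        data = record.get("replicationEventData", {})
        rows.append({
            "kind": kind,
            "event_time": record.get("eventTime"),
            "bucket": s3.get("bucket", {}).get("name"),
            "key": s3.get("object", {}).get("key"),
            "rule_id": data.get("replicationRuleId"),
            # Only expected on the "completed after threshold" event.
            "replication_time": data.get("replicationTime"),
        })
    return rows


# Hypothetical payload with one late record and one unrelated record.
sample_event = {"Records": [
    {"eventName": "Replication:OperationMissedThreshold",
     "eventTime": "2019-11-20T10:00:00.000Z",
     "s3": {"bucket": {"name": "example-source"},
            "object": {"key": "backups/a.tar"}},
     "replicationEventData": {"replicationRuleId": "logs-rule"}},
    {"eventName": "ObjectCreated:Put",
     "s3": {"bucket": {"name": "example-source"},
            "object": {"key": "backups/b.tar"}}},
]}
late_rows = extract_late_replications(sample_event)
```

In a Lambda function, `extract_late_replications(event)` could be called directly from the handler; for SQS or SNS delivery the S3 payload arrives as a JSON string in the message body and would need `json.loads` first.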

To learn more, read about Replication.

Available Now

You can start using these features today in all commercial AWS Regions, excluding the AWS China (Beijing) and AWS China (Ningxia) Regions.

— Jeff;

PS – If you want to learn more about how S3 works, be sure to attend the re:Invent session: Beyond Eleven Nines: Lessons from the Amazon S3 Culture of Durability.

Source: AWS News